There are several ways to evaluate the quality of machine translation:

- Human evaluation: A bilingual evaluator, often a professional translator, compares the machine-translated text with the original text and judges how accurately the meaning has been carried over.

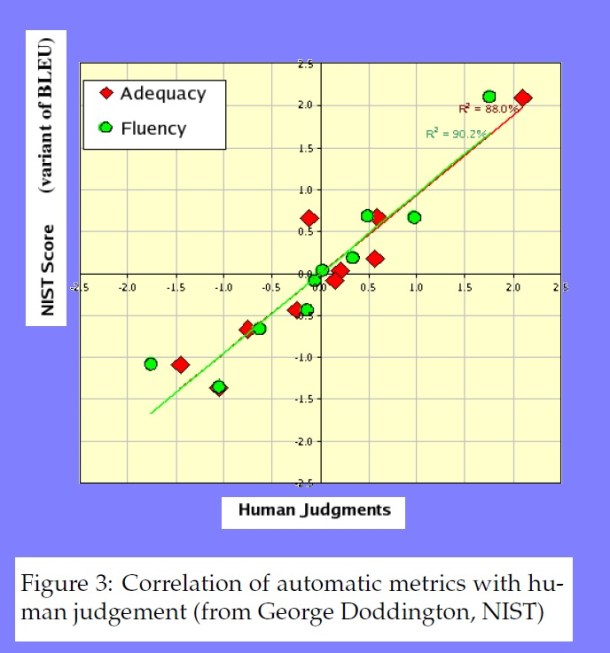

- Automatic evaluation metrics: Metrics such as BLEU (Bilingual Evaluation Understudy) and TER (Translation Edit Rate, sometimes expanded as Translation Error Rate) score machine-translated text against one or more reference translations. BLEU measures how many word n-grams the output shares with the reference, while TER counts the edits needed to turn the output into the reference, so a higher BLEU and a lower TER both indicate a closer match.

- User feedback: Another way to evaluate machine translation is to gather feedback from users who have used the translation service. This can provide insight into the overall usability and effectiveness of the service.

- Comparison with human translation: Machine translation can also be evaluated by comparing it to a human translation of the same text. This can provide a baseline for the quality of the machine translation and allow for a more detailed analysis of its strengths and weaknesses.
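
To make the automatic metrics above more concrete, here is a minimal Python sketch of the two ideas behind them. It is a simplification, not a reference implementation: `simple_bleu` and `word_edit_rate` are hypothetical helper names, tokenization is plain whitespace splitting, only a single reference is supported, BLEU is computed without smoothing, and the TER-like score ignores the block shifts that full TER allows.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Return a Counter of all n-grams (as tuples) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def simple_bleu(hypothesis, reference, max_n=4):
    """Simplified sentence-level BLEU: one reference, no smoothing (assumption)."""
    hyp, ref = hypothesis.split(), reference.split()
    if not hyp:
        return 0.0
    log_precisions = []
    for n in range(1, max_n + 1):
        hyp_counts, ref_counts = ngrams(hyp, n), ngrams(ref, n)
        # Clip each hypothesis n-gram count by its count in the reference.
        overlap = sum(min(c, ref_counts[g]) for g, c in hyp_counts.items())
        total = sum(hyp_counts.values())
        if overlap == 0 or total == 0:
            return 0.0  # without smoothing, one empty n-gram order zeroes the score
        log_precisions.append(math.log(overlap / total))
    # Brevity penalty: penalize hypotheses shorter than the reference.
    bp = 1.0 if len(hyp) > len(ref) else math.exp(1 - len(ref) / len(hyp))
    return bp * math.exp(sum(log_precisions) / max_n)


def word_edit_rate(hypothesis, reference):
    """TER-like score: word-level edit distance / reference length (no shifts)."""
    hyp, ref = hypothesis.split(), reference.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    prev = list(range(len(ref) + 1))
    for i, h in enumerate(hyp, start=1):
        curr = [i]
        for j, r in enumerate(ref, start=1):
            cost = 0 if h == r else 1
            # Minimum over deletion, insertion, and substitution/match.
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1] / len(ref)


reference = "the cat sat on the mat"
hypothesis = "the cat sat on a mat"
print(f"BLEU (simplified): {simple_bleu(hypothesis, reference):.3f}")
print(f"Edit rate (TER-like): {word_edit_rate(hypothesis, reference):.3f}")
```

An output identical to the reference scores 1.0 on the simplified BLEU and 0.0 on the edit rate. For real evaluations, an established toolkit is a safer choice than a hand-rolled scorer, because tokenization and smoothing choices change the numbers noticeably.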
